Introducing the New Redesigned Site Auditor

Fresh off our release of Google Search Console integration, we’re back with yet another product update. Today we’re excited to unveil our new, completely redesigned Site Auditor. It’s got more data, more charts, more options, and a much faster crawler.

First up, we’ve rewritten our crawler to be much faster than before. That means less waiting for new campaigns to be crawled. Depending on current demand and the speed of the site’s server, smaller sites (~500 URLs) can now be finished in as little as 5 minutes, and larger sites (~10,000 URLs) in as little as 30!

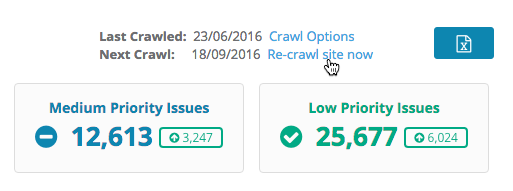

We’ll even give you more control over how fast we crawl. At the top of the Site Auditor page is a link for Crawl Options, where you can choose to crawl slower (to lessen the load on your servers) or faster (to finish crawls as soon as possible).

One of the most frequent requests our support team receives comes from users who have just made updates to their site. The next crawl is scheduled for a few days later, but wouldn’t it be nice to re-crawl the site now?

Thankfully, now you can! In addition to the bi-weekly automatic crawls, you can now initiate up to 2 crawls a month on demand! Just click “Re-crawl site now” to begin the crawl, and it should be complete in a few hours.

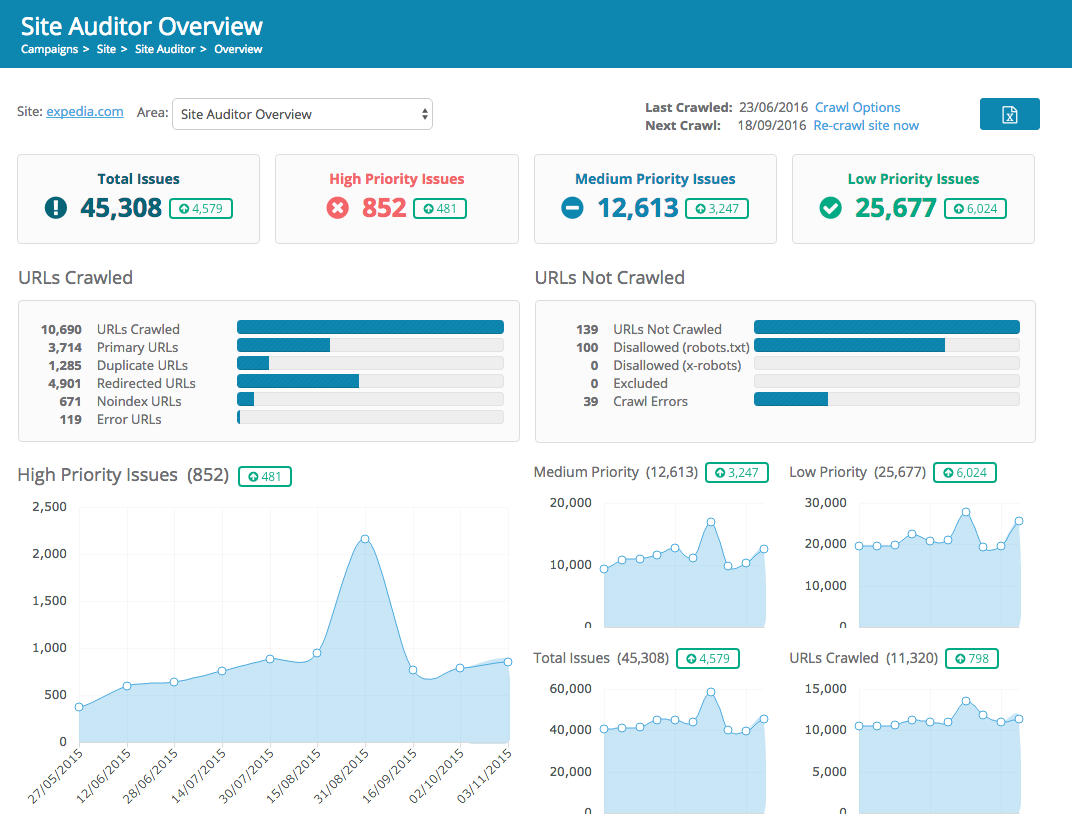

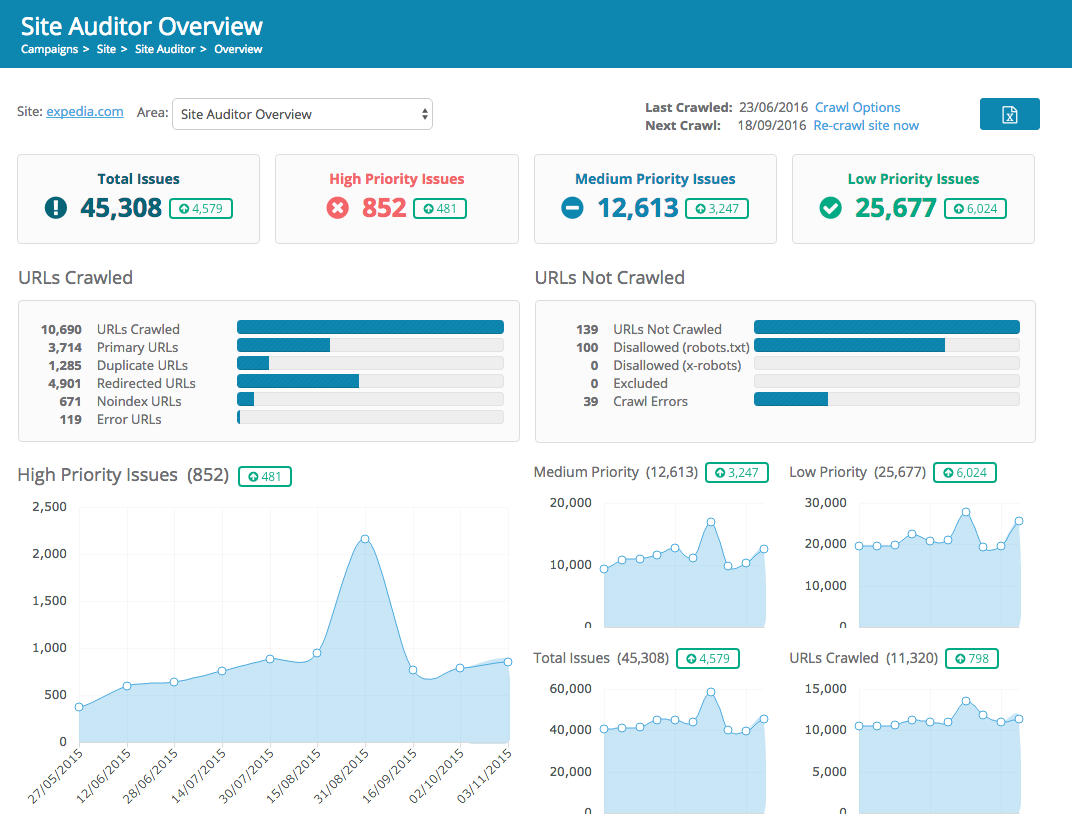

The biggest change you’ll notice will be the design of the overview page. It’s been streamlined to provide more data, presented in a more prioritized way.

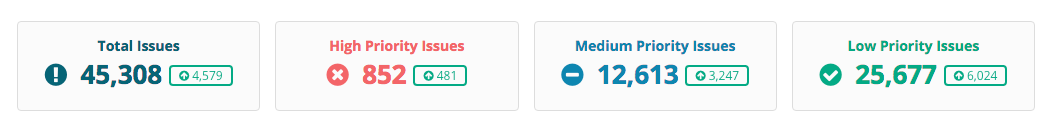

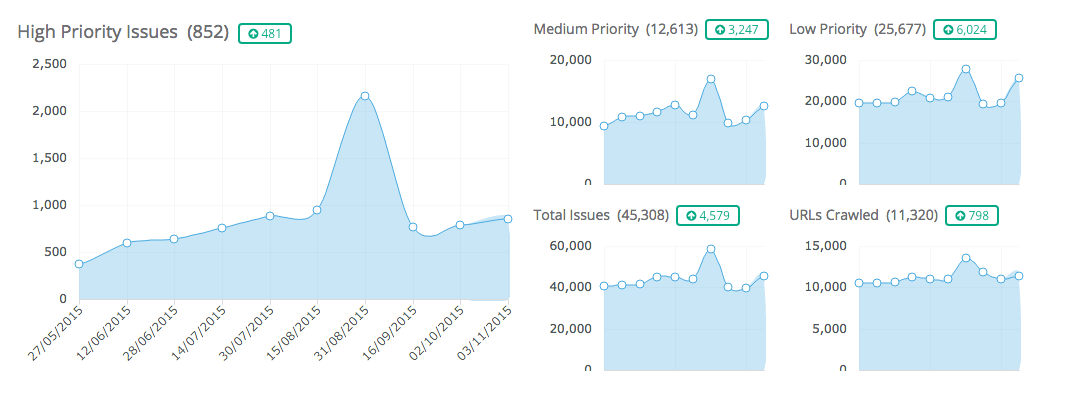

The top of the page shows a quick highlight of how many issues are affecting your site.

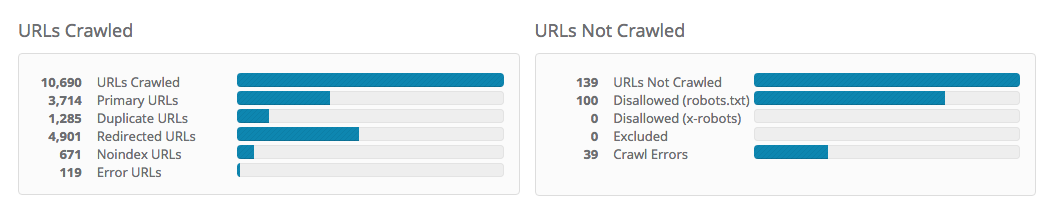

Below this is a summary of the crawl results, showing which general category each URL falls into.

A URL will be considered “not crawled” if we came across it but did not actually crawl the content on the page. This could be for several reasons: it was disallowed in the robots.txt or X-Robots-Tag, it was manually excluded from the crawl by the user, or we encountered an error receiving the response from the server. The chart displays how many of each our crawler encountered.
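For readers who like to see the logic spelled out, here is a minimal sketch of how a URL might be sorted into those “not crawled” reasons. It is an illustration only, not our crawler’s actual code: the function name, the `ExampleAuditBot` user agent, the prefix-based exclusion list, and the `fetch_failed` flag are all assumptions made for the example, and the X-Robots-Tag check is left out for brevity. The robots.txt check uses Python’s standard `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser


def not_crawled_reason(url, robots_lines, excluded_prefixes,
                       fetch_failed=False, user_agent="ExampleAuditBot"):
    """Return why a URL would be skipped, or None if it would be crawled.

    All names here are hypothetical; this only mirrors the three reasons
    described above (robots.txt, user exclusion, server error).
    """
    # Parse the robots.txt rules, supplied as a list of lines.
    parser = RobotFileParser()
    parser.parse(robots_lines)
    if not parser.can_fetch(user_agent, url):
        return "disallowed by robots.txt"

    # Exclusions set by the user, modeled here as simple URL prefixes.
    if any(url.startswith(prefix) for prefix in excluded_prefixes):
        return "excluded by user"

    # The request was attempted but the server response could not be read.
    if fetch_failed:
        return "error receiving response"

    return None


robots = ["User-agent: *", "Disallow: /private/"]
print(not_crawled_reason("https://example.com/private/report", robots, []))
print(not_crawled_reason("https://example.com/blog/post", robots,
                         ["https://example.com/blog/"]))
```

Counting how often each reason is returned across all discovered URLs would give the kind of breakdown the chart displays.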

All remaining URLs will be crawled. Each falls into one of 5 categories:

Next up is a trend of high, medium, low, and total issues, along with the number of URLs crawled.

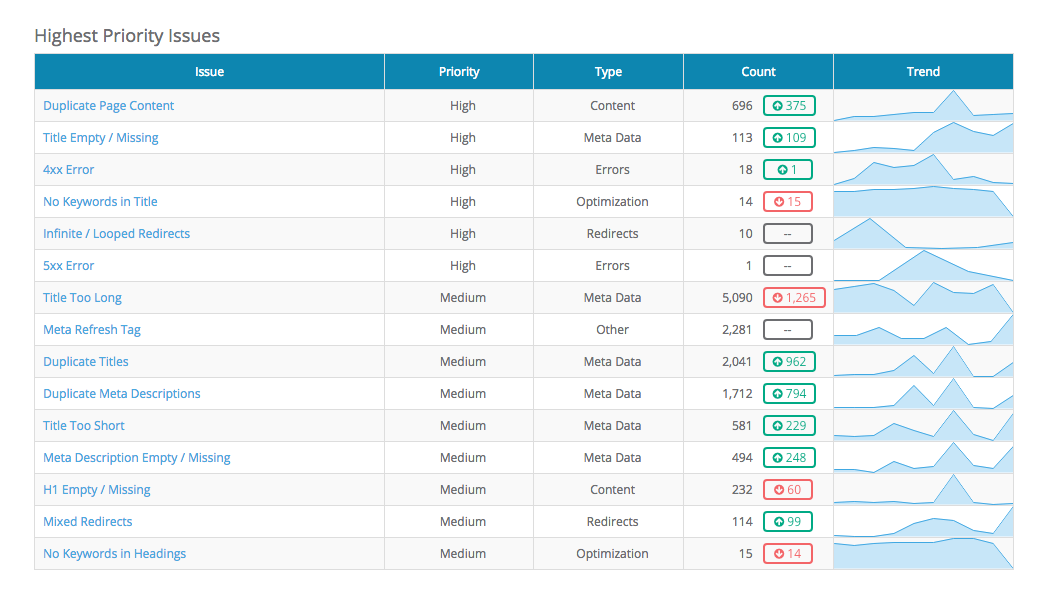

Here you’ll find a list of the highest priority issues, sorted by severity and prevalence found on your site. Each issue will show the priority, type of issue, number of URLs affected, and a trend from previous crawls.

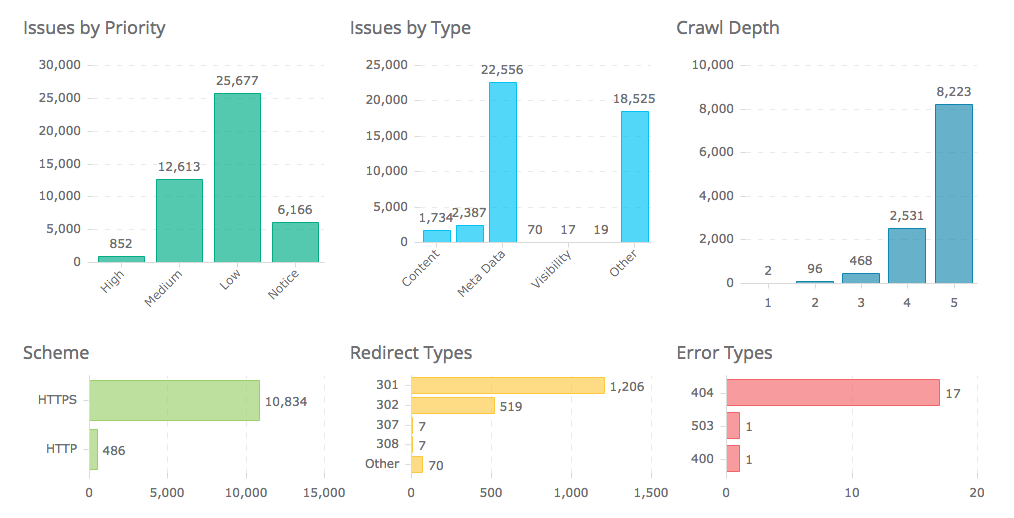

Below the table is a collection of charts to help you understand your crawl even more fully. The first two charts display issues grouped by priority and by type. The next one shows you the distribution of pages at each crawl depth (the number of steps away from the home page). Charts for scheme (HTTP vs. HTTPS) and breakdowns of the redirect and error types found on the site follow.

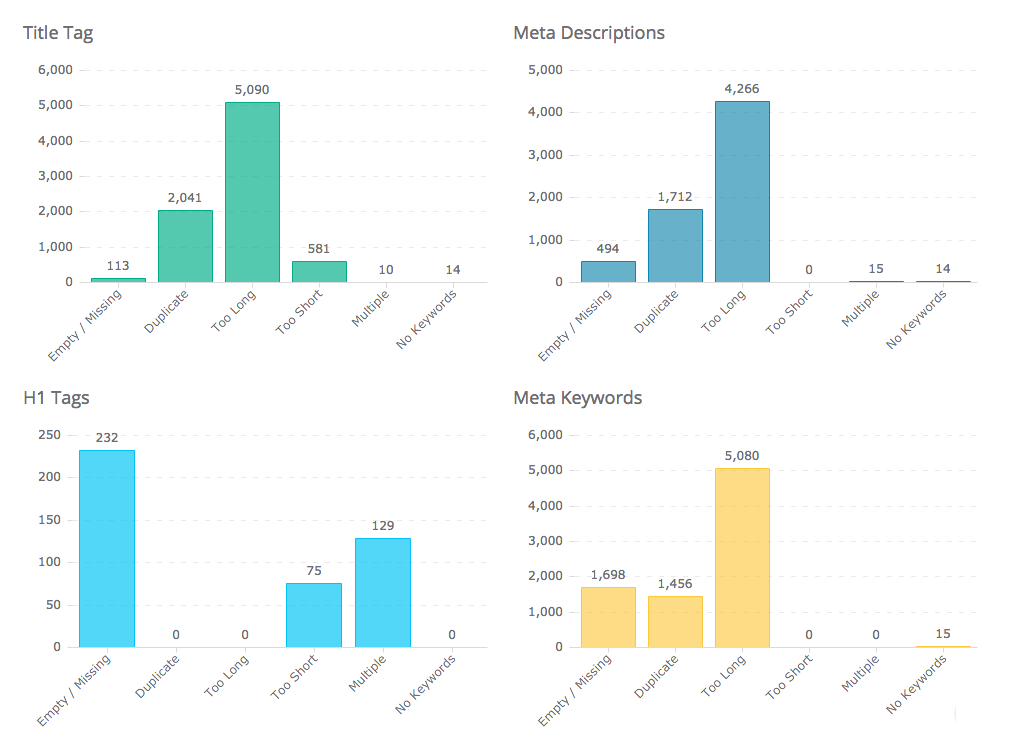
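If “crawl depth” is a new idea, the sketch below shows one common way to compute it: a breadth-first walk over the site’s internal links, starting at the home page. This is an assumption about how such a figure can be derived, not a description of our crawler’s internals, and the `links` map and function names are made up for the example.

```python
from collections import Counter, deque


def crawl_depths(links, home):
    """Map each reachable page to its number of steps from the home page.

    `links` is a hypothetical dict of page -> list of pages it links to.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # The first time a page is reached is via its shortest path.
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def depth_distribution(depths):
    """Count how many pages sit at each depth, as a depth chart would."""
    return Counter(depths.values())


links = {
    "/": ["/products", "/blog"],
    "/products": ["/products/widget"],
    "/blog": ["/products/widget", "/"],
}
depths = crawl_depths(links, "/")
print(depth_distribution(depths))
```

Pages that sit many steps from the home page are harder for both visitors and search engines to reach, which is why the distribution is worth a look.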

At the bottom of the page is an optimization summary for title, description, h1, and meta keywords tags. Use these charts to quickly understand which issues exist for each area.

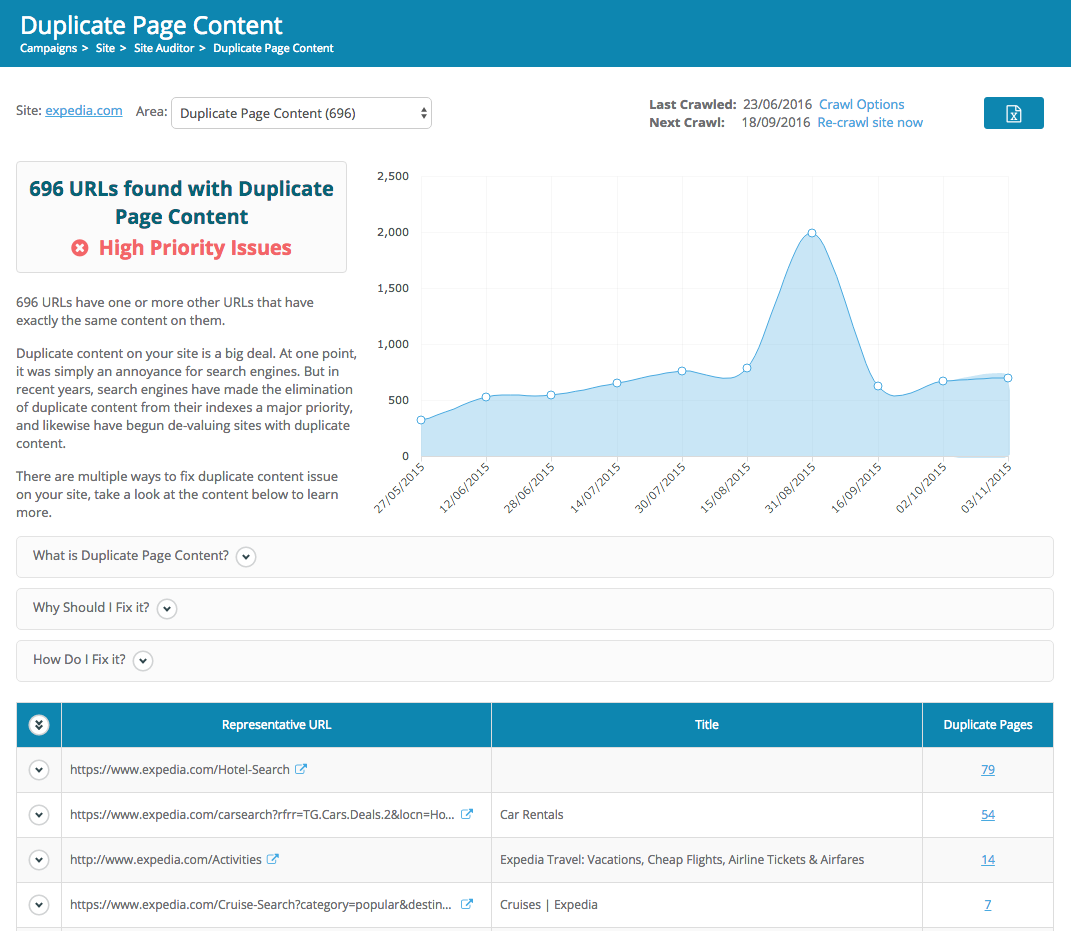

The issue details page has remained mostly the same, with just some design updates. One small improvement is that “Help with this issue” is gone, replaced with more obvious text on the page, labeled “What is this issue”, “Why should I fix it?”, and “How Do I fix it?”.

We’d love to hear what you think of the new Site Auditor. Just use the in-app chat to send us your feedback. Looking forward to hearing from you!